In this post, Andreas Bengtson and Kasper Lippert-Rasmussen discuss the article they recently published in Ergo. The full-length version of their article can be found at https://journals.publishing.umich.edu/ergo/article/id/7305/.

Can you imagine justice being achieved without democracy being realized? The view we are concerned with in this paper—relational egalitarianism—cannot. It says that justice requires that people relate as equals, and that democracy is valuable because it is a necessary, or constituent, part of relating as equals. This is a popular view these days.

Unfortunately, relational egalitarianism runs into a dilemma: either it is plausible as an answer to what makes democracy valuable but implausible as a theory of justice, or it is plausible as a theory of justice but implausible as an answer to what makes democracy valuable.

What makes this dilemma obtain? Relational egalitarianism is a claim about how social relations should be: they should be equal, as opposed to unequal. This means that a precondition for the view is that social relations exist. However, relational egalitarians have not said much about how they understand social relations.

There are different routes relational egalitarians could take here. One option is to go for a moralized view of social relations. For X and Y to be socially related on such a view, they must be able to treat each other in objectionable ways—e.g. in a racist, sexist or exploitative sort of way.

Another option is to go for a non-moralized view. On this view, you have a lexical account of what we mean when we say that two people are socially related. Such a view, we argue, puts interaction or communication front and center: if X and Y can interact or communicate, they are socially related.

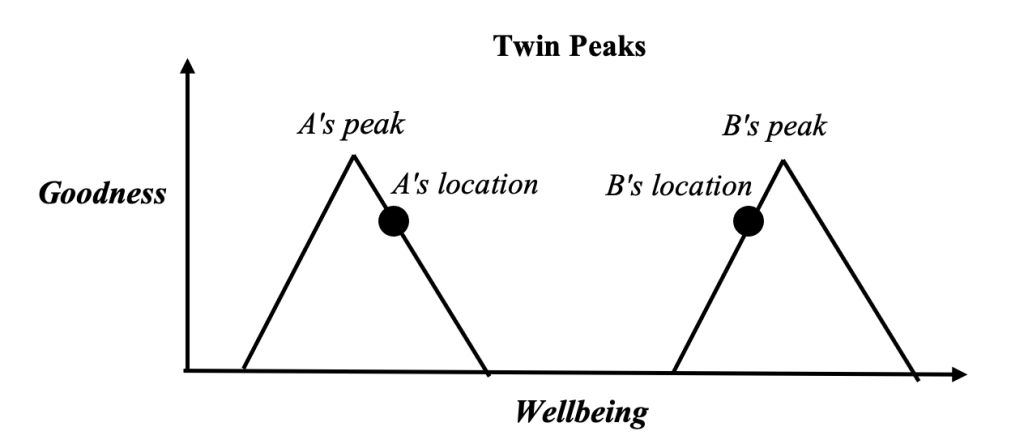

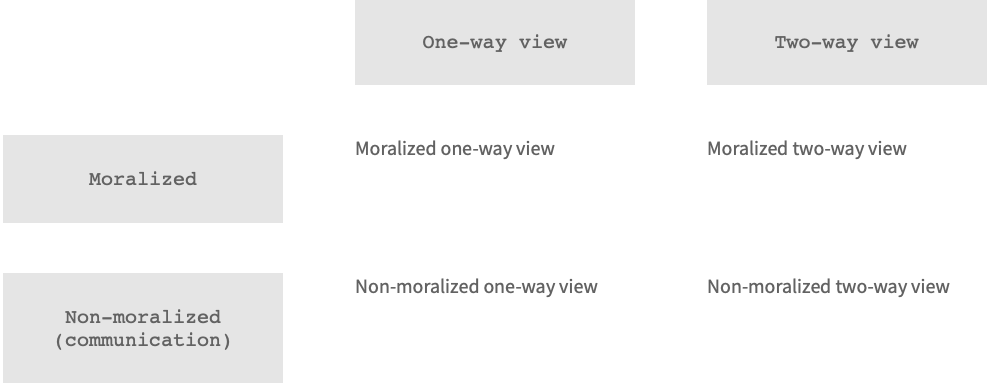

The moralized and the non-moralized views come in both a one-way and a two-way version. In the one-way version, it suffices that either X or Y satisfies the requirements (e.g., it suffices, for X and Y to be socially related, that X can communicate with Y). In the two-way version, both parties need to satisfy the requirements (e.g., for X and Y to be socially related, it must be the case both that X can communicate with Y, and that Y can communicate with X). This gives us four possible views of social relations: the Moralized One-way View, the Moralized Two-way View, the Non-moralized One-way View, and the Non-moralized Two-way View.

We argue that the view of social relations to which relational egalitarians need to appeal in order to make relational egalitarianism plausible as a theory of justice (the Moralized One-way View) also makes relational egalitarianism implausible as an answer to what makes democracy valuable.

Consider:

Sexist Son: The parents, a father and a mother, of a thirty-year-old man suddenly die in a car crash which leaves the son with the task of handling their wills. He decides to abide by his father’s will (to publish his book manuscript) but not abide by his mother’s will (to publish her book manuscript) solely because he is a very old-fashioned sexist who believes that women should not write books and feels strong revulsion at the thought of his mother publishing a book.

Relating to another in a sexist way is a paradigmatic injustice according to relational egalitarianism. Thus, it is important, for relational egalitarianism to be a plausible theory of justice, that it can condemn sexism as unjust.

Sexist Son is a case of the son relating to his parents in a sexist way. But Sexist Son can be condemned as unjust only if there exists a social relation between the son and his mother (since, as we pointed out, a social relation can be unequal, and therefore unjust, only if there is a social relation to begin with). Because the mother is dead, she can no longer treat the son in any way, and the two can no longer interact or communicate; the son, however, can still treat her in an objectionable way. So only the Moralized One-way View implies that the son and his mother are socially related. Thus, in order for the view to be plausible as a theory of justice, relational egalitarians must adopt the Moralized One-way View of social relations.

But this creates trouble for the part of the view that claims that democracy is valuable because it is constitutive of equal relations. The reason is that one important requirement on any view of the value of democracy is that it provides a plausible solution to the boundary problem—i.e., the problem of determining who should be included in the demos.

Now, on the relational egalitarian view, those who are socially related should be included in the demos. But if we employ the Moralized One-way View of social relations, then currently living people and dead people are socially related, which means that dead people should be included in contemporary democratic decision-making.

It is a mainstay in democratic theory and history that democratic inclusion of dead people cuts against the egalitarian core of democracy. Indeed, many think that such inclusion amounts to government ‘by the dead hand of the past.’ Thus, adopting the Moralized One-way View makes relational egalitarianism implausible as a view of the value of democracy.

Hence the dilemma: making relational egalitarianism plausible as an answer to what makes democracy valuable comes at the price of making relational egalitarianism implausible as a theory of justice, and vice versa.

Is there a way out of the dilemma? Arguably, the least costly way out is to say that relational egalitarianism is not a unified theory but rather a disjunction of two different ideals of how social relations ought to be: one focused on justice, the other on democracy.

Want more?

Read the full article at https://journals.publishing.umich.edu/ergo/article/id/7305/.

About the authors

Andreas Bengtson is Associate Professor at the Centre for the Experimental-Philosophical Study of Discrimination at the Department of Political Science, Aarhus University. He has published on issues such as affirmative action, democracy, discrimination, paternalism and relational equality. His work has appeared in journals such as American Journal of Political Science, British Journal of Political Science, Free & Equal, Journal of Ethics and Social Philosophy, Journal of Political Philosophy, Journal of Moral Philosophy, and Politics, Philosophy & Economics.

Kasper Lippert-Rasmussen (D.Phil.) is professor at Aarhus University (AU), Denmark. He is the director of the Centre for the Experimental-Philosophical Study of Discrimination at AU. He has published widely in ethics and political philosophy. Recent books include Wrongful Discrimination (CUP) and The Beam and the Mote (OUP). Presently, he is working on two monographs: Poverty Discrimination (under contract with CUP; with Bastian Steuwer) and Discrimination Against the Aged.